Acoustic Music Similarity Analysis

Motivated by a desire for better music recommendations, I built a fully automatic system that recommends music based on “how it sounds,” using musical characteristics like instrumentation, tempo, and mood. The goal is to give completely objective recommendations instead of relying on collaborative filtering (e.g. “Listeners Also Bought…” on iTunes), which is easily skewed by popular songs and fails to account for new music.

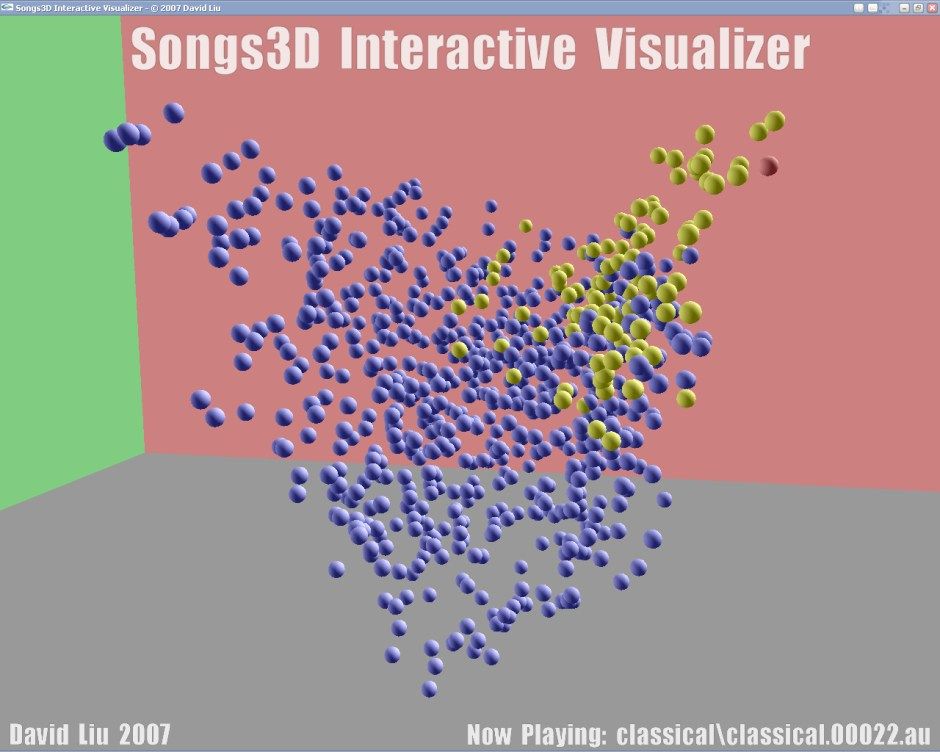

I wrote software to extract the frequency distributions of songs, run feature analysis, and arrange the songs in a space where similar songs are closer together.

3D music browser using audio similarity. Songs are displayed as bubbles in space, with similar songs placed together; the highlighted songs are all classical music.

I competed with this project at the Intel ISEF, California State Science Fair, and Synopsys Silicon Valley Science Fair. Here’s a video from Intel of my live demo at ISEF 2007.

Live demo at Intel ISEF 2007

Tech Specs: The most successful technique I found was extracting MFCC features from short windows, clustering them into a feature histogram (a per-song signature), and comparing these signatures with the Earth Mover’s Distance. Then, drawing on techniques from spectral clustering, I arranged the songs in a transformed feature space. This was a novel approach that separated songs into distinct groups and produced significantly better retrieval results, as measured by accuracy in genre matching.
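To make the pipeline above more concrete, here is a minimal Python sketch of the three stages: building a signature per song, comparing signatures with the Earth Mover’s Distance, and laying the songs out with a spectral embedding. It is an illustration under several assumptions, not the code from the project: the per-window frames are synthetic random vectors standing in for real MFCCs, the clustering is a plain k-means, the Earth Mover’s Distance is solved as a small linear program with SciPy, and the layout uses a Gaussian affinity with a normalized graph Laplacian. The function names `signature`, `emd`, and `spectral_embedding` are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog


def signature(frames, k=4, iters=20, seed=0):
    """Summarize per-window feature vectors as k centroids plus weights."""
    rng = np.random.default_rng(seed)
    centers = frames[rng.choice(len(frames), size=k, replace=False)]
    for _ in range(iters):
        # Plain k-means: recompute each centroid from its assigned frames.
        d = np.linalg.norm(frames[:, None, :] - centers[None, :, :], axis=2)
        labels = d.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = frames[labels == j].mean(axis=0)
    # Final assignment gives the histogram weights (fraction of frames per cluster).
    d = np.linalg.norm(frames[:, None, :] - centers[None, :, :], axis=2)
    weights = np.bincount(d.argmin(axis=1), minlength=k) / len(frames)
    return centers, weights


def emd(sig_a, sig_b):
    """Earth Mover's Distance between two signatures whose weights sum to 1."""
    (ca, wa), (cb, wb) = sig_a, sig_b
    # Ground distance: Euclidean distance between cluster centroids.
    cost = np.linalg.norm(ca[:, None, :] - cb[None, :, :], axis=2)
    m, n = cost.shape
    # Flow f[i, j] is flattened row-major: row sums equal wa, column sums equal wb.
    a_eq = np.zeros((m + n, m * n))
    for i in range(m):
        a_eq[i, i * n:(i + 1) * n] = 1.0
    for j in range(n):
        a_eq[m + j, j::n] = 1.0
    b_eq = np.concatenate([wa, wb])
    # The last constraint is implied by the others, so it is dropped.
    res = linprog(cost.ravel(), A_eq=a_eq[:-1], b_eq=b_eq[:-1], bounds=(0, None))
    return res.fun


def spectral_embedding(dist, dims=3, sigma=None):
    """Coordinates from the eigenvectors of a normalized graph Laplacian."""
    sigma = sigma or np.median(dist[dist > 0])
    affinity = np.exp(-(dist ** 2) / (2 * sigma ** 2))
    np.fill_diagonal(affinity, 0.0)
    d_inv_sqrt = 1.0 / np.sqrt(affinity.sum(axis=1))
    lap = np.eye(len(dist)) - d_inv_sqrt[:, None] * affinity * d_inv_sqrt[None, :]
    _, vecs = np.linalg.eigh(lap)  # eigenvalues come back in ascending order
    # Skip the first (trivial) eigenvector; the next `dims` are the coordinates.
    return vecs[:, 1:dims + 1]


# Synthetic stand-ins for MFCC frames: two groups of "songs" with different means.
rng = np.random.default_rng(1)
songs = [rng.normal(loc=0.0 if i < 3 else 5.0, size=(200, 13)) for i in range(6)]
sigs = [signature(s) for s in songs]

# Pairwise distance matrix between all songs.
n = len(sigs)
dist = np.zeros((n, n))
for i in range(n):
    for j in range(i + 1, n):
        dist[i, j] = dist[j, i] = emd(sigs[i], sigs[j])

coords = spectral_embedding(dist)  # one 3D point per song
```

In this toy setup, songs drawn from the same group end up with smaller pairwise distances than songs from different groups, which is the property the 3D browser relies on. With real audio, the synthetic `songs` list would be replaced by MFCC frames extracted from each track.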