Semantic Image Analysis and Image Exploration

Intel Science Talent Search 2010 interview

Each day, we’re creating millions of family photos, medical images, and scientific images, which makes it increasingly difficult to find the ones we want. I built a system that searches images automatically by understanding their meaning. Just as humans describe pictures using concepts such as “trees” and “buildings,” my research links visual cues with these concepts to improve search accuracy. I created a novel image browser that interactively reveals “clusters” of similar images better than any previous method. Finally, I applied my algorithm to automatically detect threats to buried oil pipelines by finding unauthorized digging equipment in aerial images.

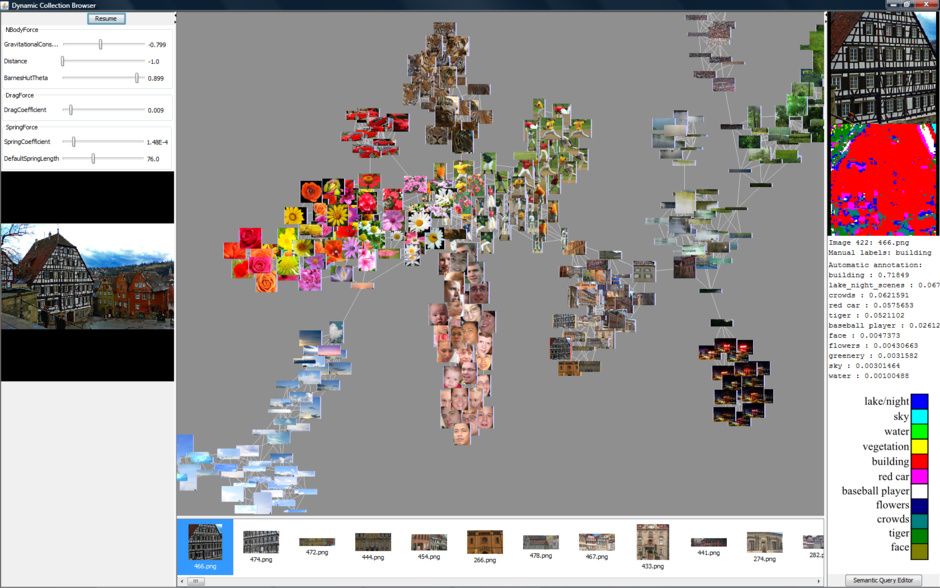

Dynamic image browser clusters photos by similarity. Concepts such as “sky,” “flowers,” or “buildings” are automatically grouped by the spring graph (center). Clicking an image produces a list of the most similar images (bottom). Each part of the selected image is automatically analyzed and color-coded based on the concept (right).

In order to visualize large image collections, I created an interactive exploration system. It uses a spring graph to cluster images, revealing a distinct semantic structure not visible with previous visualization methods. To my knowledge, this was the first use of spring graphs for image browsing. My system solves a common challenge in visualization: exploration of large, high-dimensional data sets in a low-dimensional display.
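As a rough illustration of how a spring graph can pull similar images together, here is a minimal sketch. It is not the system's actual force model, which is not described here. It assumes each pair of images is joined by a spring whose rest length equals a precomputed dissimilarity score; the function name `spring_layout` and the parameters `iterations`, `step`, and `seed` are hypothetical.

```python
import math
import random

def spring_layout(dissimilarity, iterations=500, step=0.05, seed=0):
    """Place n items in 2-D so that distances approximate `dissimilarity`.

    Assumption: every pair (i, j) is joined by a spring whose rest length
    is dissimilarity[i][j]. Each iteration moves every node a small step
    along the net spring force (Hooke's law).
    """
    rng = random.Random(seed)
    n = len(dissimilarity)
    pos = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(n)]
    for _ in range(iterations):
        forces = [[0.0, 0.0] for _ in range(n)]
        for i in range(n):
            for j in range(i + 1, n):
                dx = pos[j][0] - pos[i][0]
                dy = pos[j][1] - pos[i][1]
                dist = math.hypot(dx, dy) or 1e-9
                # A stretched spring (positive) pulls the pair together,
                # a compressed spring (negative) pushes it apart.
                stretch = dist - dissimilarity[i][j]
                fx = stretch * dx / dist
                fy = stretch * dy / dist
                forces[i][0] += fx
                forces[i][1] += fy
                forces[j][0] -= fx
                forces[j][1] -= fy
        for i in range(n):
            pos[i][0] += step * forces[i][0]
            pos[i][1] += step * forces[i][1]
    return pos
```

Given a dissimilarity matrix in which two groups of images are close within each group and far apart across groups, the returned positions should place each group in its own cluster. For larger collections, the step size would likely need to shrink to keep the simulation stable.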

This video from GeekDad shows the demo, recorded while I was presenting it on the exhibition floor of the Science Talent Search in Washington, D.C.

Demo at Science Talent Search exhibition

Application to Oil Pipeline Threat Detection

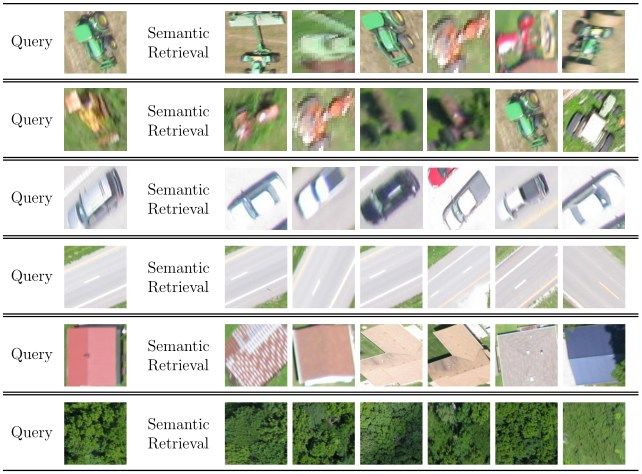

I also applied my semantic retrieval system to search aerial images for threats to buried oil pipelines. It classified aerial image patches, detecting digging equipment with high accuracy. These results indicate that semantic image annotation holds promise for aerial image analysis. Eventually, unmanned aerial vehicles could use this technology in real time.

Semantic image queries on data from aerial imagery.

I entered this project in several competitions, including the Intel Science Talent Search, Intel International Science and Engineering Fair, California State Science Fair, and Junior Science and Humanities Symposium.

Tech Specs: I built an image retrieval system that creates probabilistic models of semantic concepts. My latest system learns a Gaussian Mixture Model for each concept. These models are fit with expectation-maximization on a 27-dimensional feature space: color is represented by the three YCbCr channels for perceptual uniformity, and texture is represented by the responses to 24 Gabor filters.
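To make the per-concept modeling concrete, here is a minimal sketch of fitting one Gaussian Mixture Model per concept with expectation-maximization and labeling a feature vector by the concept whose model gives it the highest likelihood. Several things here are assumptions and not details of the actual system: diagonal covariances, the number of components, the maximum-likelihood decision rule, and the names `fit_gmm`, `log_likelihood`, and `classify`. The feature vectors are assumed to be precomputed (for example, the 27 color and texture values for an image region).

```python
import math
import random

def log_gauss_diag(x, mean, var):
    """Log density of a Gaussian with diagonal covariance (an assumption)."""
    return -0.5 * sum(math.log(2 * math.pi * v) + (xi - m) ** 2 / v
                      for xi, m, v in zip(x, mean, var))

def logsumexp(values):
    """Numerically stable log(sum(exp(v))) over a list of log values."""
    top = max(values)
    return top + math.log(sum(math.exp(v - top) for v in values))

def fit_gmm(data, k, iterations=50, seed=0, min_var=1e-3):
    """Fit a k-component diagonal GMM to `data` with expectation-maximization."""
    rng = random.Random(seed)
    dim = len(data[0])
    means = [list(p) for p in rng.sample(data, k)]  # start from k data points
    variances = [[1.0] * dim for _ in range(k)]
    weights = [1.0 / k] * k
    for _ in range(iterations):
        # E-step: responsibility of each component for each point.
        resp = []
        for x in data:
            logs = [math.log(weights[c]) + log_gauss_diag(x, means[c], variances[c])
                    for c in range(k)]
            norm = logsumexp(logs)
            resp.append([math.exp(v - norm) for v in logs])
        # M-step: re-estimate weights, means, and variances.
        for c in range(k):
            total = sum(r[c] for r in resp) + 1e-12
            weights[c] = total / len(data)
            means[c] = [sum(r[c] * x[d] for r, x in zip(resp, data)) / total
                        for d in range(dim)]
            variances[c] = [max(min_var,
                                sum(r[c] * (x[d] - means[c][d]) ** 2
                                    for r, x in zip(resp, data)) / total)
                            for d in range(dim)]
    return weights, means, variances

def log_likelihood(x, model):
    """Log-likelihood of feature vector x under a fitted GMM."""
    weights, means, variances = model
    return logsumexp([math.log(w) + log_gauss_diag(x, m, v)
                      for w, m, v in zip(weights, means, variances)])

def classify(x, concept_models):
    """Return the concept whose GMM assigns x the highest log-likelihood."""
    return max(concept_models,
               key=lambda name: log_likelihood(x, concept_models[name]))
```

In use, one would call `fit_gmm` once per concept on that concept's training vectors, collect the results in a dictionary keyed by concept name, and pass it to `classify` along with the feature vector of a new image region.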